Just before Christmas I found myself glued to this year’s BBC Reith Lectures on living with artificial intelligence, delivered by Stuart Russell. I’ve worked with AI throughout my professional career, in the form of Silicon Valley’s search and social media content algorithms. But Professor Russell’s lecture series painted a terrifying picture of how far artificial intelligence has already penetrated our lives, and of how much more controlling it could become.

The rapid advance of artificial intelligence

Professor Russell’s first lecture gave us a quick summary of how artificial intelligence evolved over the last century. It ranged from Arthur Samuel’s draughts-playing computer, which proved on television in 1956 that it had learned to beat its creator, to Ernst Dickmanns’ self-driving Mercedes, tested on an (albeit closed) autobahn as long ago as 1987.

But the real acceleration in artificial intelligence has been in the past decade, as machine learning systems have evolved to recognise human speech, objects, even faces. Today, AI is used in everything from search engines to recruitment to autonomous delivery planes.

As artificial intelligence has developed, so too have questions about how we will live with it. As Russell pointed out, these questions have not only been raised in response to Blade Runner-type visions; they were posed as far back as the early 1600s, when the philosopher Francis Bacon wrote: “The mechanical arts may be turned either way, and serve as well for the cure as for the hurt.”

Killer robots for sale

Any discussion about the future of artificial intelligence inevitably turns to lethal autonomous weapon systems, or what have been dubbed ‘killer robots’, and Russell’s second and most terrifying lecture was devoted to them.

“We could have small, lethal autonomous weapons costing between $5 and $10 each, and if you allow those to be manufactured in large quantities, then it’s like selling nuclear weapons in Tesco. That’s not a future that makes sense to me.”

Stuart Russell

Weapons that locate, select and engage human targets without human supervision are already available for use in warfare, so Russell asked what role AI will play in the future of military conflict. He used the lecture to call on the upcoming UN review conference of the Convention on Certain Conventional Weapons (CCW) to start negotiations to regulate autonomous weapons, and to draw a legal and moral line against machines killing people.

“There are 8 billion people wondering why you cannot give them some protection against being hunted down and killed by robots.”

Stuart Russell, addressing the UN’s Sixth CCW Review Conference

Although the majority of states called for negotiations on a legally binding instrument to address the risks posed by autonomous weapons, the final day of the CCW review conference saw a small group of states, including the UK, US, India and Russia (all of which are already developing autonomous weapons), block progress towards regulation.

AI and the future of work

Russell’s lecture on AI and its impact on work and the economy was the most fascinating, posing as it did big questions about the meaning of work, life and purpose, and the role of humanity itself.

Optimistic visions of what artificial intelligence could do for our working lives frequently focus on us all being released from the drudgery of menial and repetitive work, which is taken over by machines. Pessimistic forecasts see us all out of work as a result, with wealth and power increasingly concentrated in the hands of those who control the machines: a situation not dissimilar to today’s Big Tech hegemony.

Russell tried to untangle these competing predictions and to pinpoint exactly what comparative advantages humans may retain over machines. Interestingly, he suggested that, after decades of focusing on the critical importance of STEM in education, this may call for greater investment in the humanities and the arts. He also suggested that an emphasis on uniquely human qualities (caring, empathy, etc.) could bring increased status and pay for professions based on interpersonal services, which are currently undervalued in society. AI might be able to identify that you have cancer, for example, but would you want to hear that diagnosis from a machine?

Stopping your robot from killing your cat

Perhaps the most memorable illustration of how a poorly designed general-purpose AI could go badly wrong was the picture Russell drew of life with a domestic robot. “Imagine you have a domestic robot. It’s at home looking after the kids and the kids have had their dinner but are still hungry. It looks in the fridge and there’s not much left to eat. The robot is wondering what to do, then it sees the family cat. You can imagine what might happen next…”

As Russell says, “It’s a misunderstanding of human values, it’s not understanding that the sentimental value of the cat is much greater than the nutritional value.”

Russell’s answer to predicaments like this, and to the overarching question of how humans retain control of artificial intelligence, is that all AI must be built with the understanding that it doesn’t know exactly what humans want it to do.

“We have to build AI systems that know they don’t know the true objective, even though it’s what they must pursue. The robot defers to the human.”

Stuart Russell

His is just one of the solutions currently being debated in academic and corporate circles for harnessing the huge power of artificial intelligence while ensuring it does no harm. The aim of all such debates, Russell says, should be to build public trust in AI (the public having seen plenty of sci-fi films of robots running amok) and to agree where AI should, and shouldn’t, be used.

As Russell says, we already have a precedent for all of this in nuclear weapons: “Nuclear global cooperation provides the model for AI cooperation.” Given some of the scenarios conjured up in his Reith Lectures, let’s fervently hope the global community can get its act together before someone decides to see just what a batch of those $5 lethal autonomous weapons can do.

Read more on living with algorithms

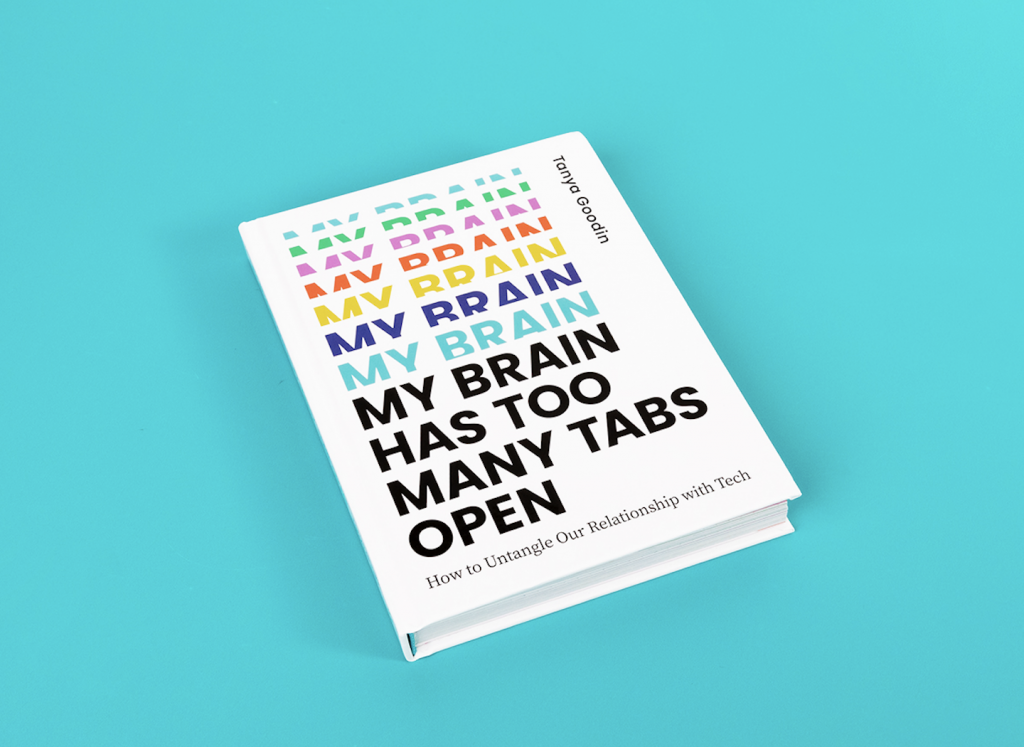

If you want to find out more about living with the artificial intelligence algorithms of Big Tech, pick up a copy of my new book.